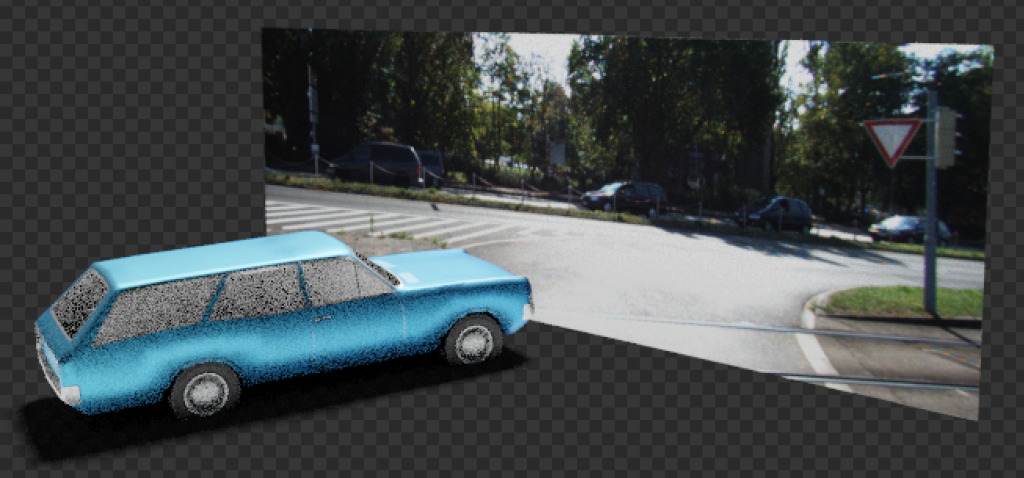

Trying to demonstrate how to do data augmentation on the KITTI stereo dataset, I found myself diving (too deeply) into Blender 3D.

What I set out to do is add a 3D object to an unsuspecting image from the KITTI dataset, to show that one way to get more training data is by synthesizing. I just put a 3D object in front of an image (say a 3D plane with the image as the texture) and done ✅ – I’ve augmented the image. Turns out it needs a bit more of Blender trickery. This is the real reason for this post.